Multiclass classification using a perceptron

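The softmax function, also known as the normalized exponential function, turns a vector of real-valued scores into a probability distribution: every output lies between 0 and 1, and the outputs sum to 1. In this notebook it is the output activation of a single-layer perceptron with one unit per class.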
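To make the normalization concrete, here is a minimal pure-Python sketch of softmax, computed as exp(z_i) divided by the sum of exp(z_j) over all scores. The helper name `softmax` and the example scores are illustrative assumptions, not part of the notebook's own code.

```python
import math


def softmax(scores):
    """Return the softmax of a list of real-valued scores."""
    # Subtracting the maximum does not change the result but avoids overflow in exp().
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([2.0, 1.0, 0.1])
print([round(p, 3) for p in probs])  # [0.659, 0.242, 0.099]
```

The largest score receives the largest probability, and the three values add up to 1.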


Importing libraries and packages

    # System
    import os

    # Silence TensorFlow's INFO and WARNING logs. This must be set before
    # TensorFlow is imported to take effect.
    os.environ["TF_CPP_MIN_LOG_LEVEL"] = "2"

    # Mathematical operations and data manipulation
    import pandas as pd
    from pandas import get_dummies

    # Modelling
    import tensorflow as tf

    # Statistics
    from sklearn.metrics import accuracy_score

    # Plotting
    import matplotlib.pyplot as plt

    %matplotlib inline

Set paths

    # Path to datasets directory
    data_path = "./datasets"
    # Path to assets directory (for saving results to)
    assets_path = "./assets"

Loading dataset

    dataset = pd.read_csv(f"{data_path}/iris.csv")
    dataset.head()

| | petallength | petalwidth | sepallength | sepalwidth | species |
|---|---|---|---|---|---|
| 0 | 5.1 | 3.5 | 1.4 | 0.2 | 0 |
| 1 | 4.9 | 3.0 | 1.4 | 0.2 | 0 |
| 2 | 4.7 | 3.2 | 1.3 | 0.2 | 0 |
| 3 | 4.6 | 3.1 | 1.5 | 0.2 | 0 |
| 4 | 5.0 | 3.6 | 1.4 | 0.2 | 0 |

Exploring dataset

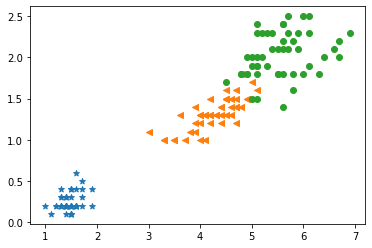

    # One scatter series per species, each with its own marker
    for species, marker in [(0, "*"), (1, "<"), (2, "o")]:
        subset = dataset[dataset["species"] == species]
        plt.scatter(
            subset["sepallength"],
            subset["sepalwidth"],
            marker=marker,
            label=f"species {species}",
        )
    plt.xlabel("sepallength")
    plt.ylabel("sepalwidth")
    plt.legend()
    plt.show()


Training of the network

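    # Split into features and labels, then convert both to NumPy arrays
    x = dataset[["petallength", "petalwidth", "sepallength", "sepalwidth"]].values
    y = dataset["species"].values
    # One-hot encode the labels as float32 so they match the network's outputs
    # (recent pandas versions would otherwise return boolean columns)
    y = get_dummies(y, dtype="float32").values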
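The one-hot step can be pictured without pandas. The sketch below is a hand-written stand-in for what `get_dummies` produces when the labels are the integers 0, 1 and 2; the helper `one_hot` and its `num_classes` parameter are hypothetical names introduced only for illustration.

```python
def one_hot(labels, num_classes):
    """Encode integer class labels as one-hot rows."""
    rows = []
    for label in labels:
        row = [0.0] * num_classes
        row[label] = 1.0  # mark the column that belongs to this class
        rows.append(row)
    return rows


encoded = one_hot([0, 2, 1], num_classes=3)
print(encoded)  # [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
```

Each row has exactly one 1, which is what lets the labels be compared position by position with the three softmax outputs.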

    # Convert the features to a float32 tensor. They are inputs rather than
    # trainable parameters, so a constant tensor is enough.
    x = tf.constant(x, dtype=tf.float32)

    # Model dimensions: four input features, one output unit per species
    Number_of_features = 4
    Number_of_units = 3

    # Weights and bias, initialized to zero
    weight = tf.Variable(tf.zeros([Number_of_features, Number_of_units]))
    bias = tf.Variable(tf.zeros([Number_of_units]))

    # Optimizer: Adam with a learning rate of 0.01
    optimizer = tf.optimizers.Adam(0.01)
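The weight matrix has one row per feature and one column per output unit, so each unit's score is a weighted sum of the four features plus that unit's bias. A rough pure-Python sketch of this computation for a single sample follows; the helper `affine` is a hypothetical name, and the sample values are taken from the first row of the table above.

```python
def affine(sample, weights, bias):
    """Compute z_k = sum_j sample[j] * weights[j][k] + bias[k] for every unit k."""
    n_units = len(bias)
    return [
        sum(x_j * w_row[k] for x_j, w_row in zip(sample, weights)) + bias[k]
        for k in range(n_units)
    ]


sample = [5.1, 3.5, 1.4, 0.2]            # one row with four features
weights = [[0.0] * 3 for _ in range(4)]  # 4 features x 3 units, all zeros
bias = [0.0, 0.0, 0.0]

print(affine(sample, weights, bias))  # [0.0, 0.0, 0.0]
```

With zero-initialized parameters every score is 0, so before training the softmax assigns each class the same probability of 1/3.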

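    def logits(x):
        # Raw class scores: one affine transformation, no activation
        return tf.add(tf.matmul(x, weight), bias)


    def perceptron(x):
        # Class probabilities: softmax over the raw scores
        return tf.nn.softmax(logits(x))


    def train(i):
        for _ in range(i):
            with tf.GradientTape() as tape:
                # softmax_cross_entropy_with_logits applies softmax itself, so it
                # takes the raw scores, not the output of perceptron()
                loss = tf.reduce_mean(
                    tf.nn.softmax_cross_entropy_with_logits(
                        labels=tf.cast(y, tf.float32), logits=logits(x)
                    )
                )
            gradients = tape.gradient(loss, [weight, bias])
            optimizer.apply_gradients(zip(gradients, [weight, bias]))


    # Train the network
    train(1000)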
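`tf.nn.softmax_cross_entropy_with_logits` applies softmax internally, so it expects raw scores rather than probabilities. The pure-Python sketch below illustrates why that distinction matters; the helper names and the example scores are assumptions made for illustration and are not TensorFlow's implementation.

```python
import math


def softmax(scores):
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy_from_logits(one_hot_label, logits):
    """Cross-entropy between a one-hot label and softmax(logits)."""
    probs = softmax(logits)
    return -sum(t * math.log(p) for t, p in zip(one_hot_label, probs))


label = [1.0, 0.0, 0.0]
logits = [4.0, 1.0, -2.0]

loss_correct = cross_entropy_from_logits(label, logits)
# Feeding probabilities instead of raw scores applies softmax twice.
loss_double = cross_entropy_from_logits(label, softmax(logits))

print(loss_correct < loss_double)  # True
```

Because probabilities are confined to the range 0 to 1, a second softmax flattens them: with three classes the loss computed this way cannot fall below roughly 0.55, however confident the model is.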

Statistics

    tf.print(weight)

[[0.684310615 0.895632923 -1.01323473]
 [2.64246488 -1.13437688 -3.20665288]
 [-2.96634197 -0.129377589 3.2572844]
 [-2.97383857 -3.13501596 3.23136568]]

    tf.print(bias)

[2.72811317 5.23916721 -3.98247242]

    # Pass the training inputs through the perceptron to get class probabilities
    ypred = perceptron(x)
    # Round each probability to 0 or 1 so the predictions can be compared with
    # the one-hot labels
    ypred = tf.round(ypred)

    # Measure the accuracy: the fraction of samples whose rounded prediction
    # matches the one-hot label in every position
    acc = accuracy_score(y, ypred)
    print(acc)

0.98
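Rounding works when one class clearly dominates, but a row in which no probability exceeds 0.5 rounds to all zeros and cannot match any one-hot label. A common alternative is to take the index of the largest probability instead. The sketch below uses made-up probabilities and a hypothetical `argmax` helper to show the difference; it is not the evaluation used for the figure above.

```python
def argmax(values):
    """Return the index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])


probabilities = [
    [0.90, 0.07, 0.03],
    [0.40, 0.45, 0.15],  # no entry exceeds 0.5
    [0.05, 0.15, 0.80],
]
true_classes = [0, 1, 2]

rounded = [[round(p) for p in row] for row in probabilities]
predicted = [argmax(row) for row in probabilities]
accuracy = sum(p == t for p, t in zip(predicted, true_classes)) / len(true_classes)

print(rounded[1])  # [0, 0, 0]
print(predicted)   # [0, 1, 2]
print(accuracy)    # 1.0
```

The second sample is lost by rounding but classified correctly by the arg-max rule, so the two approaches can report different accuracies on the same predictions.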