Apple stock price prediction

This notebook predicts the next day's closing stock price from historical prices. It uses a cleaned-up version of Apple's historical stock data sourced from the Nasdaq website.

Importing libraries and packages

    # System
    import os

    # Mathematical operations and data manipulation
    import numpy as np
    import pandas as pd
    import math

    # Modelling
    from sklearn.preprocessing import MinMaxScaler
    import tensorflow as tf
    import keras
    from tensorflow.keras import layers

    # Plotting
    import matplotlib.pyplot as plt
    from IPython.display import display, HTML

    %matplotlib inline
    display(HTML("<style>.container {width:80% !important;}</style>"))

    print("Tensorflow version:", tf.__version__)
    print("Keras version:", keras.__version__)

Tensorflow version: 2.4.1

Keras version: 2.4.3

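    # Reduce TensorFlow C++ log verbosity. Setting this after `import tensorflow`
    # may be too late to take effect; move it before the import if logs persist.
    os.environ["TF_CPP_MIN_LOG_LEVEL"] = "2"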

Set paths

    # Path to datasets directory
    data_path = "./datasets"
    # Path to assets directory (for saving results to)
    assets_path = "./assets"

Loading dataset

    dataset = pd.read_csv(f"{data_path}/AAPL.csv")

    dataset.head()

| | Date | Close | Open | High | Low | Volume |
|---|---|---|---|---|---|---|
| 0 | 1/17/2020 | 138.31 | 136.54 | 138.330 | 136.16 | 5623336 |
| 1 | 1/16/2020 | 137.98 | 137.32 | 138.190 | 137.01 | 4320911 |
| 2 | 1/15/2020 | 136.62 | 136.00 | 138.055 | 135.71 | 4045952 |
| 3 | 1/14/2020 | 135.82 | 136.28 | 137.139 | 135.55 | 3683458 |
| 4 | 1/13/2020 | 136.60 | 135.48 | 136.640 | 135.07 | 3531572 |

    dataset.tail()

| | Date | Close | Open | High | Low | Volume |
|---|---|---|---|---|---|---|
| 2509 | 1/29/2010 | 122.39 | 124.32 | 125.000 | 121.90 | 11571890 |
| 2510 | 1/28/2010 | 123.75 | 127.03 | 127.040 | 123.05 | 9616132 |
| 2511 | 1/27/2010 | 126.33 | 125.82 | 126.960 | 125.04 | 8719147 |
| 2512 | 1/26/2010 | 125.75 | 125.92 | 127.750 | 125.41 | 7135190 |
| 2513 | 1/25/2010 | 126.12 | 126.33 | 126.895 | 125.71 | 5738455 |

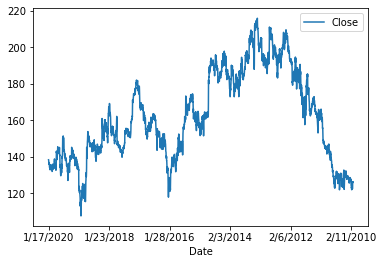

Exploring dataset

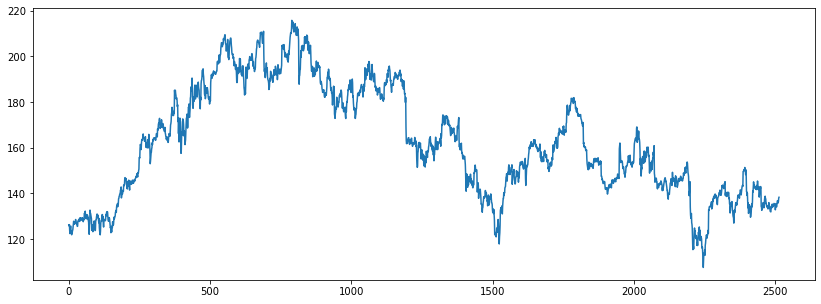

    dataset.plot("Date", "Close")
    plt.show()

    # The CSV is stored newest-first; reverse the row order so the series
    # runs oldest-to-newest, which is easier to plot and to split
    dataset = dataset.sort_index(ascending=False)
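
Reversing by index works here because the file happens to be ordered newest-first. As a hedged alternative, the sketch below sorts on parsed dates instead, so it does not depend on the file order. The toy frame reuses three rows shown in `dataset.head()`; the name `df` is illustrative and not part of the notebook.

```python
import pandas as pd

# Toy frame in the same newest-first layout as the CSV (three rows from head())
df = pd.DataFrame(
    {
        "Date": ["1/17/2020", "1/16/2020", "1/15/2020"],
        "Close": [138.31, 137.98, 136.62],
    }
)

# Parse the month/day/year strings, then sort chronologically
df["Date"] = pd.to_datetime(df["Date"], format="%m/%d/%Y")
df = df.sort_values("Date").reset_index(drop=True)

print(df["Close"].tolist())  # [136.62, 137.98, 138.31]
```

Sorting on a real datetime column also makes the x-axis of later plots meaningful, at the cost of one extra parsing step.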

    # Extracting values for 'Close' from the dataframe as a numpy array.
    ts_data = dataset.Close.values.reshape(-1, 1)

    plt.figure(figsize=(14, 5))
    plt.plot(ts_data)
    plt.show()

Preparing the data

    # Chronological split: the first 75% of records for training, the rest for testing
    train_recs = int(len(ts_data) * 0.75)

    train_data = ts_data[:train_recs]
    test_data = ts_data[train_recs:]

    len(train_data), len(test_data)

(1885, 629)

    # Scaling: fit the scaler on the training split only, then reuse it on the test split
    scaler = MinMaxScaler()
    train_scaled = scaler.fit_transform(train_data)
    test_scaled = scaler.transform(test_data)
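
Because the scaler is fitted on the training split only, test prices above the training maximum map to values greater than 1. The sketch below reproduces the min-max formula that `MinMaxScaler` applies with its default feature range, using plain NumPy and made-up prices; `scale` and `unscale` are illustrative helpers, not notebook functions.

```python
import numpy as np

# Hypothetical prices, not taken from AAPL.csv
train = np.array([[10.0], [12.0], [14.0]])
test = np.array([[15.0], [16.0]])

# Min and max come from the training split only
lo, hi = train.min(axis=0), train.max(axis=0)


def scale(x):
    """Map values to [0, 1] relative to the training range."""
    return (x - lo) / (hi - lo)


def unscale(x):
    """Invert scale(), returning values in price units."""
    return x * (hi - lo) + lo


train_scaled = scale(train)
test_scaled = scale(test)

print(train_scaled.ravel().tolist())  # [0.0, 0.5, 1.0]
print(test_scaled.ravel().tolist())   # [1.25, 1.5]
```

Values outside [0, 1] are expected for a rising series and are mapped back to prices correctly by the inverse transform.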

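    def get_lookback(inp, look_back):
        """Build lagged features: row t holds the `look_back` values before t."""
        y = pd.DataFrame(inp)
        # Column i holds the series shifted by i + 1 steps (most recent lag first)
        dataX = [y.shift(i) for i in range(1, look_back + 1)]
        dataX = pd.concat(dataX, axis=1)
        # The first `look_back` rows lack a full history; pad the gaps with zeros
        dataX.fillna(0, inplace=True)
        return dataX.values, y.values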

    look_back = 10
    trainX, trainY = get_lookback(train_scaled, look_back=look_back)
    testX, testY = get_lookback(test_scaled, look_back=look_back)

    trainX.shape, testX.shape

((1885, 10), (629, 10))
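
To make the lag layout concrete, here is a small NumPy sketch that builds the same kind of matrix for a five-element series. `lag_matrix` is a hypothetical re-implementation written for illustration, not the notebook's `get_lookback`; it assumes the same conventions (most recent lag in the first column, zeros where history is missing).

```python
import numpy as np


def lag_matrix(series, look_back):
    """Row t holds series[t-1], series[t-2], ..., with zeros where history is missing."""
    series = np.asarray(series, dtype=float).ravel()
    X = np.zeros((len(series), look_back))
    for i in range(1, look_back + 1):
        # Column i - 1 is the series delayed by i steps
        X[i:, i - 1] = series[:-i]
    return X, series.reshape(-1, 1)


X, y = lag_matrix([1, 2, 3, 4, 5], look_back=2)
print(X.tolist())
# [[0.0, 0.0], [1.0, 0.0], [2.0, 1.0], [3.0, 2.0], [4.0, 3.0]]

# Optional variant: drop the zero-padded rows so every sample has a full history
X_full, y_full = X[2:], y[2:]
print(X_full.tolist(), y_full.ravel().tolist())
# [[2.0, 1.0], [3.0, 2.0], [4.0, 3.0]] [3.0, 4.0, 5.0]
```

The notebook keeps the padded rows for training, which is why the feature matrices have as many rows as the splits themselves.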

Base RNN model

    # Training base model (requires numpy=1.19,
    # see https://github.com/tensorflow/models/issues/9706)
    model = tf.keras.Sequential(
        [
            layers.Reshape((look_back, 1), input_shape=(look_back,)),
            layers.SimpleRNN(32, input_shape=(look_back, 1)),
            layers.Dense(1),
            layers.Activation("linear"),
        ]
    )

    model.compile(loss="mean_squared_error", optimizer="adam")

    model.summary()

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

reshape (Reshape) (None, 10, 1) 0

_________________________________________________________________

simple_rnn (SimpleRNN) (None, 32) 1088

_________________________________________________________________

dense (Dense) (None, 1) 33

_________________________________________________________________

activation (Activation) (None, 1) 0

=================================================================

Total params: 1,121

Trainable params: 1,121

Non-trainable params: 0

_________________________________________________________________
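
The parameter counts in the summary above can be checked by hand. The sketch below uses the standard weight shapes of a simple recurrent layer (input kernel, recurrent kernel, bias) and of a dense layer; the helper names are illustrative.

```python
def simple_rnn_params(input_dim, units):
    """Input kernel + recurrent kernel + bias."""
    return input_dim * units + units * units + units


def dense_params(input_dim, units):
    """Weight matrix + bias."""
    return input_dim * units + units


rnn = simple_rnn_params(input_dim=1, units=32)
dense = dense_params(input_dim=32, units=1)

print(rnn, dense, rnn + dense)  # 1088 33 1121
```

The recurrent kernel (32 x 32 = 1024 weights) dominates, so the count grows roughly quadratically with the number of units.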

    model.fit(
        trainX, trainY, epochs=3, batch_size=1, verbose=2, validation_split=0.1
    )

Epoch 1/3

1696/1696 - 13s - loss: 0.0084 - val_loss: 0.0010

Epoch 2/3

1696/1696 - 14s - loss: 0.0011 - val_loss: 8.3796e-04

Epoch 3/3

1696/1696 - 15s - loss: 9.4386e-04 - val_loss: 3.3792e-04

<tensorflow.python.keras.callbacks.History at 0x7fb3b9fd5c70>

    def calculate_performance(model_obj):
        # evaluate() returns the MSE loss on the scaled data, so the RMSE
        # printed here is in scaled units, not in price units
        score_train = model_obj.evaluate(trainX, trainY, verbose=0)
        print("Train RMSE: %.2f RMSE" % (math.sqrt(score_train)))

        score_test = model_obj.evaluate(testX, testY, verbose=0)
        print("Test RMSE: %.2f RMSE" % (math.sqrt(score_test)))


    calculate_performance(model)

Train RMSE: 0.03 RMSE

Test RMSE: 0.03 RMSE
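
These RMSE values are computed on min-max scaled data. Since the scaling is affine, an RMSE in price units is the scaled RMSE multiplied by the training range (available on a fitted `MinMaxScaler` as `data_range_`). The sketch below checks that relationship with made-up numbers; none of the values are results from this notebook.

```python
import numpy as np

# Hypothetical numbers for illustration only
train_prices = np.array([100.0, 120.0, 150.0])
lo, hi = train_prices.min(), train_prices.max()


def scale(x):
    """Min-max scaling relative to the training range."""
    return (x - lo) / (hi - lo)


def rmse(a, b):
    """Root mean squared error between two arrays."""
    return float(np.sqrt(np.mean((a - b) ** 2)))


y_true = np.array([110.0, 130.0, 140.0])
y_pred = np.array([112.0, 127.0, 141.0])

rmse_scaled = rmse(scale(y_true), scale(y_pred))
rmse_price = rmse(y_true, y_pred)

# The two differ only by the factor (hi - lo)
print(np.isclose(rmse_scaled * (hi - lo), rmse_price))  # True
```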

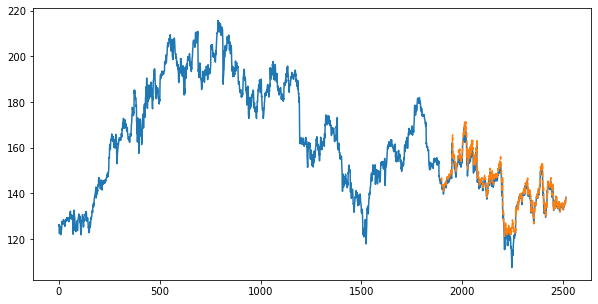

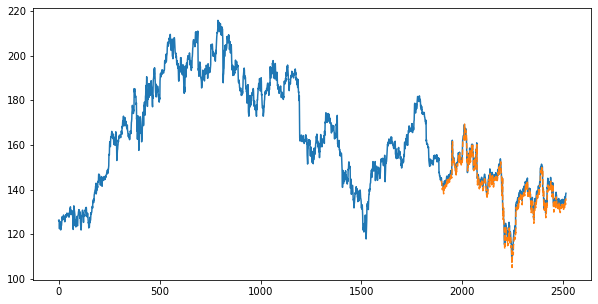

    def plot_prediction(model_obj):
        # Predict on the test set and map the predictions back to price units
        testPredict = scaler.inverse_transform(model_obj.predict(testX))

        # Overlay array: NaN everywhere except the test period, skipping the
        # first `look_back` test rows, whose inputs were zero-padded
        pred_test_plot = ts_data.copy()
        pred_test_plot[: train_recs + look_back, :] = np.nan
        pred_test_plot[train_recs + look_back :, :] = testPredict[look_back:]

        plt.plot(ts_data)
        plt.plot(pred_test_plot, "--")


    plt.figure(figsize=(10, 5))
    plot_prediction(model)
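
The index arithmetic in `plot_prediction` is easier to follow on a tiny example. The sketch below uses toy stand-ins for `ts_data`, `train_recs`, `look_back` and the model predictions (all values are illustrative) and shows which positions of the overlay end up filled.

```python
import numpy as np

# Toy stand-ins for the notebook's variables
series = np.arange(10, dtype=float).reshape(-1, 1)
train_recs, look_back = 6, 2
test_pred = series[train_recs:] + 0.5  # pretend predictions, shape (4, 1)

overlay = series.copy()
# Blank out the training period and the first `look_back` test rows
overlay[: train_recs + look_back, :] = np.nan
# Place the remaining predictions at their positions on the full time axis
overlay[train_recs + look_back :, :] = test_pred[look_back:]

print(overlay.ravel().tolist())
# [nan, nan, nan, nan, nan, nan, nan, nan, 8.5, 9.5]
```

Matplotlib skips NaN entries, so only the filled tail is drawn as the dashed prediction line.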

1D convolution-based model

    # Training 1D convolution-based model
    model_conv = tf.keras.Sequential(
        [
            layers.Reshape((look_back, 1), input_shape=(look_back,)),
            layers.Conv1D(5, 5, activation="relu"),
            layers.MaxPooling1D(5),
            layers.Flatten(),
            layers.Dense(1),
            layers.Activation("linear"),
        ]
    )

    model_conv.compile(loss="mean_squared_error", optimizer="adam")

    model_conv.summary()

Model: "sequential_1"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

reshape_1 (Reshape) (None, 10, 1) 0

_________________________________________________________________

conv1d (Conv1D) (None, 6, 5) 30

_________________________________________________________________

max_pooling1d (MaxPooling1D) (None, 1, 5) 0

_________________________________________________________________

flatten (Flatten) (None, 5) 0

_________________________________________________________________

dense_1 (Dense) (None, 1) 6

_________________________________________________________________

activation_1 (Activation) (None, 1) 0

=================================================================

Total params: 36

Trainable params: 36

Non-trainable params: 0

_________________________________________________________________
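
The output shapes and parameter counts in this summary follow from the usual formulas for unpadded ("valid") convolution and pooling, which are the Keras defaults for `Conv1D` and `MaxPooling1D`. The sketch below recomputes them; the helper names are illustrative.

```python
def output_length(input_length, window, stride=1):
    """Length after a window slides over the input without padding."""
    return (input_length - window) // stride + 1


def conv1d_params(in_channels, filters, kernel_size):
    """One kernel of kernel_size x in_channels weights plus a bias, per filter."""
    return filters * (kernel_size * in_channels + 1)


conv_len = output_length(10, window=5)                 # Conv1D(5, 5) on 10 steps
pool_len = output_length(conv_len, window=5, stride=5)  # MaxPooling1D(5)
conv_weights = conv1d_params(in_channels=1, filters=5, kernel_size=5)
dense_weights = pool_len * 5 * 1 + 1                    # flattened inputs + bias

print(conv_len, pool_len)                    # 6 1
print(conv_weights, dense_weights)           # 30 6
print(conv_weights + dense_weights)          # 36
```

The same helpers give a length of 8 and 20 parameters for the `Conv1D(5, 3)` layer used in the hybrid model.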

    model_conv.fit(
        trainX, trainY, epochs=5, batch_size=1, verbose=2, validation_split=0.1
    )

Epoch 1/5

1696/1696 - 4s - loss: 0.0128 - val_loss: 0.0011

Epoch 2/5

1696/1696 - 4s - loss: 0.0017 - val_loss: 9.8598e-04

Epoch 3/5

1696/1696 - 4s - loss: 0.0016 - val_loss: 7.6665e-04

Epoch 4/5

1696/1696 - 5s - loss: 0.0015 - val_loss: 9.4015e-04

Epoch 5/5

1696/1696 - 4s - loss: 0.0014 - val_loss: 0.0011

<tensorflow.python.keras.callbacks.History at 0x7fb3800e2340>

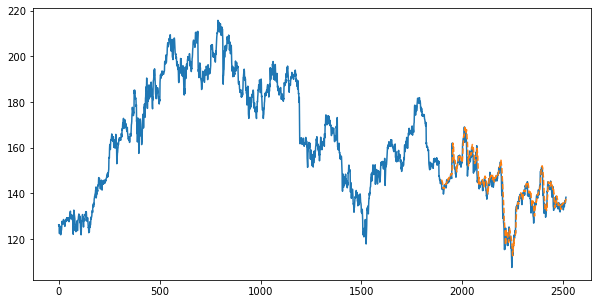

    calculate_performance(model_conv)

Train RMSE: 0.04 RMSE

Test RMSE: 0.04 RMSE

    plt.figure(figsize=(10, 5))
    plot_prediction(model_conv)

Hybrid model

    # Training a hybrid (1D conv + RNN) model
    model_hybrid = tf.keras.Sequential(
        [
            layers.Reshape((look_back, 1), input_shape=(look_back,)),
            layers.Conv1D(5, 3, activation="relu"),
            layers.SimpleRNN(32),
            layers.Dense(1),
            layers.Activation("linear"),
        ]
    )

    model_hybrid.compile(loss="mean_squared_error", optimizer="adam")

    model_hybrid.summary()

Model: "sequential_2"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

reshape_2 (Reshape) (None, 10, 1) 0

_________________________________________________________________

conv1d_1 (Conv1D) (None, 8, 5) 20

_________________________________________________________________

simple_rnn_1 (SimpleRNN) (None, 32) 1216

_________________________________________________________________

dense_2 (Dense) (None, 1) 33

_________________________________________________________________

activation_2 (Activation) (None, 1) 0

=================================================================

Total params: 1,269

Trainable params: 1,269

Non-trainable params: 0

_________________________________________________________________

    model_hybrid.fit(
        trainX, trainY, epochs=3, batch_size=1, verbose=2, validation_split=0.1
    )

Epoch 1/3

1696/1696 - 19s - loss: 0.0026 - val_loss: 5.0679e-04

Epoch 2/3

1696/1696 - 12s - loss: 0.0011 - val_loss: 3.4934e-04

Epoch 3/3

1696/1696 - 16s - loss: 0.0010 - val_loss: 0.0018

<tensorflow.python.keras.callbacks.History at 0x7fb3c008b670>

    calculate_performance(model_hybrid)

Train RMSE: 0.05 RMSE

Test RMSE: 0.03 RMSE

    plt.figure(figsize=(10, 5))
    plot_prediction(model_hybrid)