Time series analysis#

Time series analysis on the rides columns (registered and casual) in the bike sharing dataset.

Importing libraries and packages#

1# Warnings

2import warnings

3

4# Mathematical operations and data manipulation

5import pandas as pd

6

7# Statistics

8from statsmodels.tsa.stattools import adfuller

9from statsmodels.tsa.seasonal import seasonal_decompose

10

11# Plotting

12import matplotlib.pyplot as plt

13

14warnings.filterwarnings("ignore")

15%matplotlib inline

---------------------------------------------------------------------------

ImportError Traceback (most recent call last)

Input In [1], in <cell line: 8>()

5 import pandas as pd

7 # Statistics

----> 8 from statsmodels.tsa.stattools import adfuller

9 from statsmodels.tsa.seasonal import seasonal_decompose

11 # Plotting

File ~/checkouts/readthedocs.org/user_builds/analysing/conda/latest/lib/python3.9/site-packages/statsmodels/tsa/stattools.py:19, in <module>

17 from scipy import stats

18 from scipy.interpolate import interp1d

---> 19 from scipy.signal import correlate

21 from statsmodels.regression.linear_model import OLS, yule_walker

22 from statsmodels.tools.sm_exceptions import (

23 CollinearityWarning,

24 InfeasibleTestError,

25 InterpolationWarning,

26 MissingDataError,

27 )

File ~/checkouts/readthedocs.org/user_builds/analysing/conda/latest/lib/python3.9/site-packages/scipy/signal/__init__.py:309, in <module>

1 """

2 =======================================

3 Signal processing (:mod:`scipy.signal`)

(...)

307

308 """

--> 309 from . import _sigtools, windows

310 from ._waveforms import *

311 from ._max_len_seq import max_len_seq

File ~/checkouts/readthedocs.org/user_builds/analysing/conda/latest/lib/python3.9/site-packages/scipy/signal/windows/__init__.py:41, in <module>

1 """

2 Window functions (:mod:`scipy.signal.windows`)

3 ==============================================

(...)

38

39 """

---> 41 from ._windows import *

43 # Deprecated namespaces, to be removed in v2.0.0

44 from . import windows

File ~/checkouts/readthedocs.org/user_builds/analysing/conda/latest/lib/python3.9/site-packages/scipy/signal/windows/_windows.py:7, in <module>

4 import warnings

6 import numpy as np

----> 7 from scipy import linalg, special, fft as sp_fft

9 __all__ = ['boxcar', 'triang', 'parzen', 'bohman', 'blackman', 'nuttall',

10 'blackmanharris', 'flattop', 'bartlett', 'hanning', 'barthann',

11 'hamming', 'kaiser', 'gaussian', 'general_cosine',

12 'general_gaussian', 'general_hamming', 'chebwin', 'cosine',

13 'hann', 'exponential', 'tukey', 'taylor', 'dpss', 'get_window']

16 def _len_guards(M):

File ~/checkouts/readthedocs.org/user_builds/analysing/conda/latest/lib/python3.9/site-packages/scipy/fft/__init__.py:91, in <module>

89 from ._realtransforms import dct, idct, dst, idst, dctn, idctn, dstn, idstn

90 from ._fftlog import fht, ifht, fhtoffset

---> 91 from ._helper import next_fast_len

92 from ._backend import (set_backend, skip_backend, set_global_backend,

93 register_backend)

94 from numpy.fft import fftfreq, rfftfreq, fftshift, ifftshift

File ~/checkouts/readthedocs.org/user_builds/analysing/conda/latest/lib/python3.9/site-packages/scipy/fft/_helper.py:3, in <module>

1 from functools import update_wrapper, lru_cache

----> 3 from ._pocketfft import helper as _helper

6 def next_fast_len(target, real=False):

7 """Find the next fast size of input data to ``fft``, for zero-padding, etc.

8

9 SciPy's FFT algorithms gain their speed by a recursive divide and conquer

(...)

59

60 """

File ~/checkouts/readthedocs.org/user_builds/analysing/conda/latest/lib/python3.9/site-packages/scipy/fft/_pocketfft/__init__.py:3, in <module>

1 """ FFT backend using pypocketfft """

----> 3 from .basic import *

4 from .realtransforms import *

5 from .helper import *

File ~/checkouts/readthedocs.org/user_builds/analysing/conda/latest/lib/python3.9/site-packages/scipy/fft/_pocketfft/basic.py:6, in <module>

4 import numpy as np

5 import functools

----> 6 from . import pypocketfft as pfft

7 from .helper import (_asfarray, _init_nd_shape_and_axes, _datacopied,

8 _fix_shape, _fix_shape_1d, _normalization,

9 _workers)

11 def c2c(forward, x, n=None, axis=-1, norm=None, overwrite_x=False,

12 workers=None, *, plan=None):

ImportError: /home/docs/checkouts/readthedocs.org/user_builds/analysing/conda/latest/lib/python3.9/site-packages/zmq/backend/cython/../../../../.././libstdc++.so.6: version `GLIBCXX_3.4.30' not found (required by /home/docs/checkouts/readthedocs.org/user_builds/analysing/conda/latest/lib/python3.9/site-packages/scipy/fft/_pocketfft/pypocketfft.cpython-39-x86_64-linux-gnu.so)

Set paths#

1# Path to datasets directory

2data_path = "./datasets"

3# Path to assets directory (for saving results to)

4assets_path = "./assets"

Loading dataset#

1# load hourly data

2dataset = pd.read_csv(f"{data_path}/preprocessed_hour.csv")

3dataset.head()

| instant | dteday | season | yr | mnth | hr | holiday | weekday | workingday | weathersit | temp | atemp | hum | windspeed | casual | registered | cnt | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 1 | 2011-01-01 | winter | 2011 | 1 | 0 | 0 | Saturday | 0 | clear | 0.24 | 0.2879 | 81.0 | 0.0 | 3 | 13 | 16 |

| 1 | 2 | 2011-01-01 | winter | 2011 | 1 | 1 | 0 | Saturday | 0 | clear | 0.22 | 0.2727 | 80.0 | 0.0 | 8 | 32 | 40 |

| 2 | 3 | 2011-01-01 | winter | 2011 | 1 | 2 | 0 | Saturday | 0 | clear | 0.22 | 0.2727 | 80.0 | 0.0 | 5 | 27 | 32 |

| 3 | 4 | 2011-01-01 | winter | 2011 | 1 | 3 | 0 | Saturday | 0 | clear | 0.24 | 0.2879 | 75.0 | 0.0 | 3 | 10 | 13 |

| 4 | 5 | 2011-01-01 | winter | 2011 | 1 | 4 | 0 | Saturday | 0 | clear | 0.24 | 0.2879 | 75.0 | 0.0 | 0 | 1 | 1 |

1# print some generic statistics about the data

2print(f"Shape of data: {dataset.shape}")

3print(f"Number of missing values in the data: {dataset.isnull().sum().sum()}")

4

5# get statistics on the numerical columns

6dataset.describe().T

Shape of data: (17379, 17)

Number of missing values in the data: 0

| count | mean | std | min | 25% | 50% | 75% | max | |

|---|---|---|---|---|---|---|---|---|

| instant | 17379.0 | 8690.000000 | 5017.029500 | 1.00 | 4345.5000 | 8690.0000 | 13034.5000 | 17379.0000 |

| yr | 17379.0 | 2011.502561 | 0.500008 | 2011.00 | 2011.0000 | 2012.0000 | 2012.0000 | 2012.0000 |

| mnth | 17379.0 | 6.537775 | 3.438776 | 1.00 | 4.0000 | 7.0000 | 10.0000 | 12.0000 |

| hr | 17379.0 | 11.546752 | 6.914405 | 0.00 | 6.0000 | 12.0000 | 18.0000 | 23.0000 |

| holiday | 17379.0 | 0.028770 | 0.167165 | 0.00 | 0.0000 | 0.0000 | 0.0000 | 1.0000 |

| workingday | 17379.0 | 0.682721 | 0.465431 | 0.00 | 0.0000 | 1.0000 | 1.0000 | 1.0000 |

| temp | 17379.0 | 0.496987 | 0.192556 | 0.02 | 0.3400 | 0.5000 | 0.6600 | 1.0000 |

| atemp | 17379.0 | 0.475775 | 0.171850 | 0.00 | 0.3333 | 0.4848 | 0.6212 | 1.0000 |

| hum | 17379.0 | 62.722884 | 19.292983 | 0.00 | 48.0000 | 63.0000 | 78.0000 | 100.0000 |

| windspeed | 17379.0 | 12.736540 | 8.196795 | 0.00 | 7.0015 | 12.9980 | 16.9979 | 56.9969 |

| casual | 17379.0 | 35.676218 | 49.305030 | 0.00 | 4.0000 | 17.0000 | 48.0000 | 367.0000 |

| registered | 17379.0 | 153.786869 | 151.357286 | 0.00 | 34.0000 | 115.0000 | 220.0000 | 886.0000 |

| cnt | 17379.0 | 189.463088 | 181.387599 | 1.00 | 40.0000 | 142.0000 | 281.0000 | 977.0000 |

Time series plots#

1# Daily rides

2daily_rides = dataset[["dteday", "registered", "casual"]]

3daily_rides = daily_rides.groupby("dteday").sum()

4

5# Convert index to DateTime object

6daily_rides.index = pd.to_datetime(daily_rides.index)

7daily_rides.head()

| registered | casual | |

|---|---|---|

| dteday | ||

| 2011-01-01 | 654 | 331 |

| 2011-01-02 | 670 | 131 |

| 2011-01-03 | 1229 | 120 |

| 2011-01-04 | 1454 | 108 |

| 2011-01-05 | 1518 | 82 |

1# Function for plotting rolling statistics and ADF test for time series

2

3

4def test_stationarity(ts, window=10, **kwargs):

5 # Dataframe for plotting

6 plot_data = pd.DataFrame(ts)

7 plot_data["rolling_mean"] = ts.rolling(window).mean()

8 plot_data["rolling_std"] = ts.rolling(window).std()

9 # Computing p-value of Dickey-Fuller test

10 p_val = adfuller(ts)[1]

11 ax = plot_data.plot(**kwargs)

12 ax.set_title(f"Dickey-Fuller p-value: {p_val:.3f}")

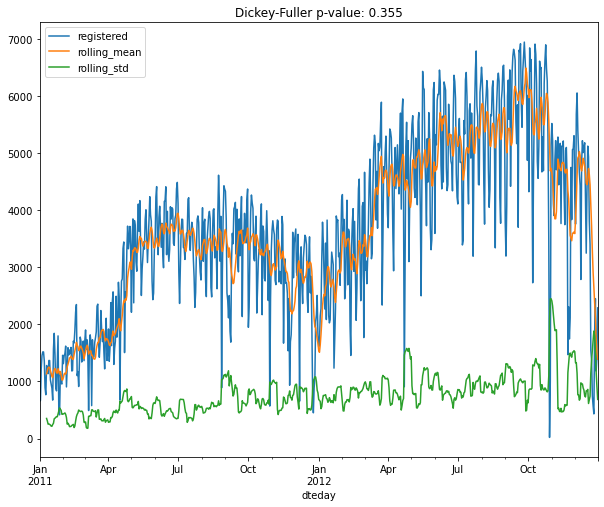

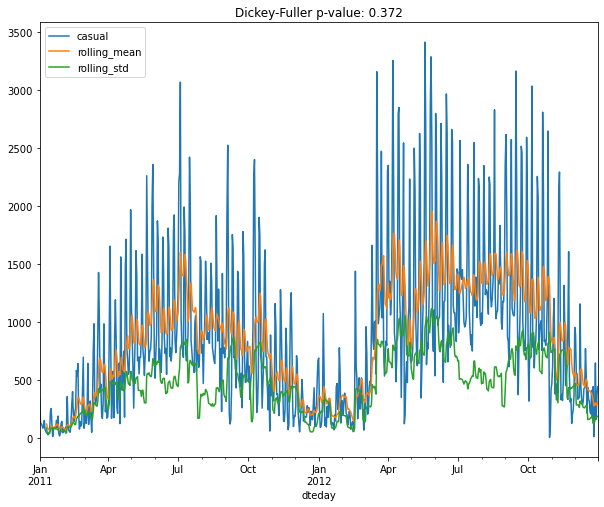

1plt.figure()

2test_stationarity(daily_rides["registered"], figsize=(10, 8))

3plt.savefig(f"{assets_path}/daily_registered_original.png", format="png")

<Figure size 432x288 with 0 Axes>

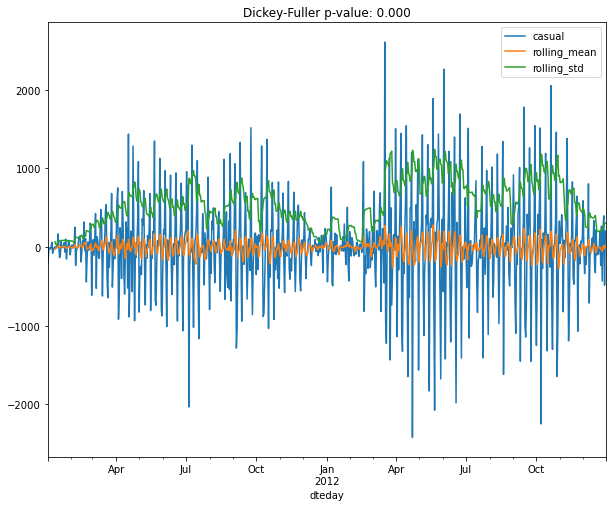

1plt.figure()

2test_stationarity(daily_rides["casual"], figsize=(10, 8))

3plt.savefig(f"{assets_path}/daily_casual_original.png", format="png")

<Figure size 432x288 with 0 Axes>

1# Making time series stationary

2registered = daily_rides["registered"]

3registered_ma = registered.rolling(10).mean()

4registered_ma_diff = registered - registered_ma

5registered_ma_diff.dropna(inplace=True)

6

7casual = daily_rides["casual"]

8casual_ma = casual.rolling(10).mean()

9casual_ma_diff = casual - casual_ma

10casual_ma_diff.dropna(inplace=True)

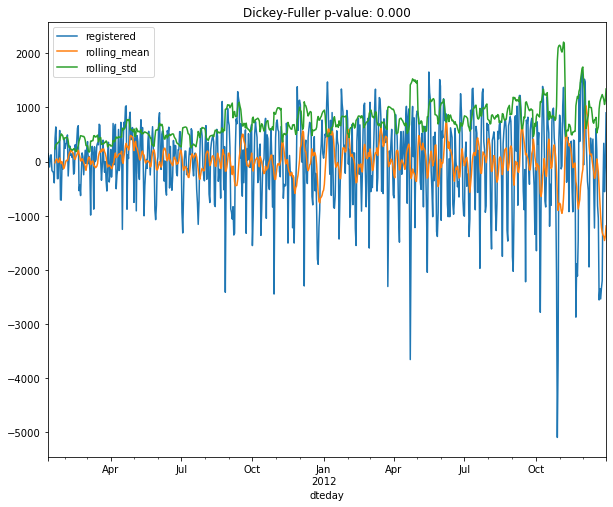

1plt.figure()

2test_stationarity(registered_ma_diff, figsize=(10, 8))

3plt.savefig(f"{assets_path}/daily_registered_ma_diff.png", format="png")

<Figure size 432x288 with 0 Axes>

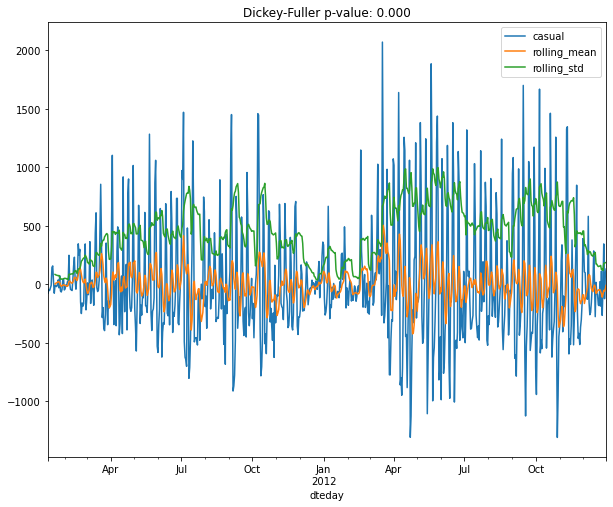

1plt.figure()

2test_stationarity(casual_ma_diff, figsize=(10, 8))

3plt.savefig(f"{assets_path}/daily_casual_ma_diff.png", format="png")

<Figure size 432x288 with 0 Axes>

1# Subtracting last value

2registered = daily_rides["registered"]

3registered_diff = registered - registered.shift()

4registered_diff.dropna(inplace=True)

5

6casual = daily_rides["casual"]

7casual_diff = casual - casual.shift()

8casual_diff.dropna(inplace=True)

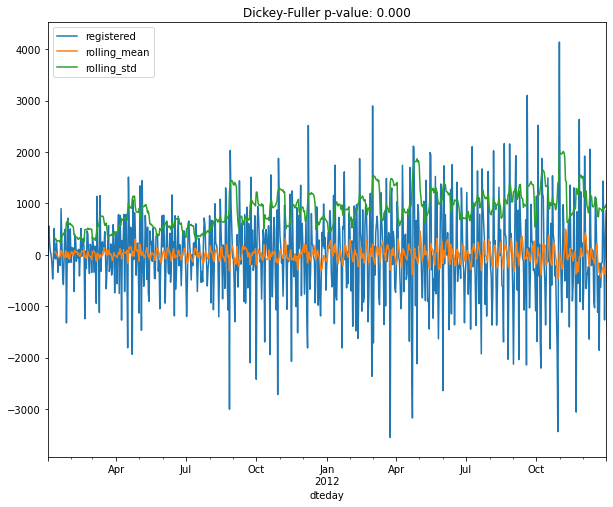

1plt.figure()

2test_stationarity(registered_diff, figsize=(10, 8))

3plt.savefig(f"{assets_path}/daily_registered_diff.png", format="png")

<Figure size 432x288 with 0 Axes>

1plt.figure()

2test_stationarity(casual_diff, figsize=(10, 8))

3plt.savefig(f"{assets_path}/daily_casual_diff.png", format="png")

<Figure size 432x288 with 0 Axes>

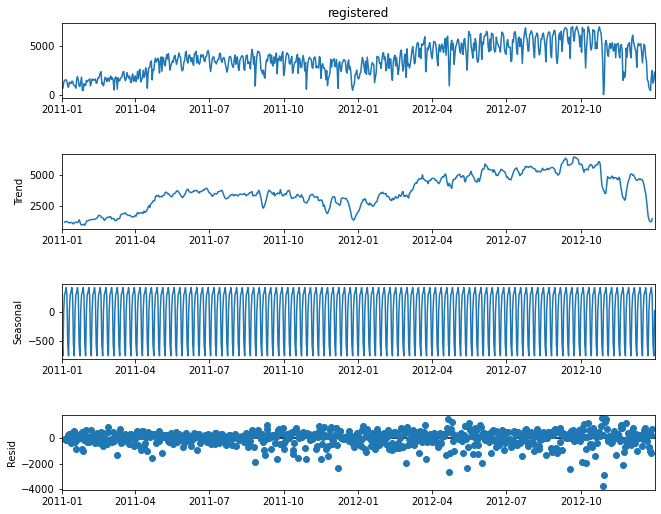

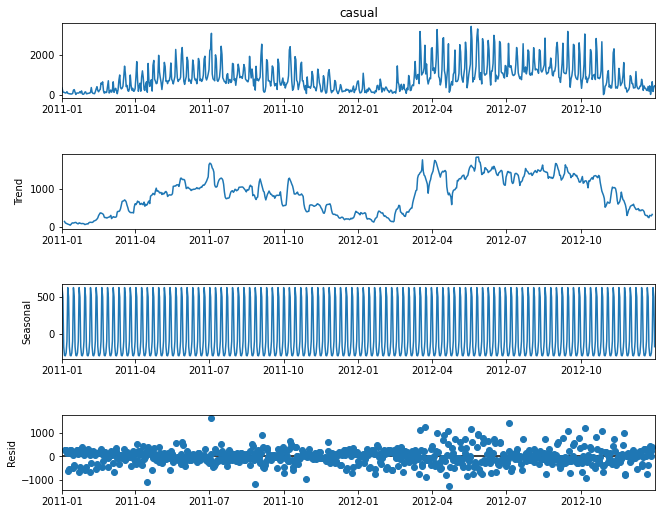

Time series decomposition in trend, seasonality, and residual components#

1registered_decomposition = seasonal_decompose(daily_rides["registered"])

2casual_decomposition = seasonal_decompose(daily_rides["casual"])

1# Plotting decompositions

2registered_plot = registered_decomposition.plot()

3registered_plot.set_size_inches(10, 8)

4

5casual_plot = casual_decomposition.plot()

6casual_plot.set_size_inches(10, 8)

7

8registered_plot.savefig(

9 f"{assets_path}/registered_decomposition.png", format="png"

10)

11casual_plot.savefig(f"{assets_path}/casual_decomposition.png", format="png")

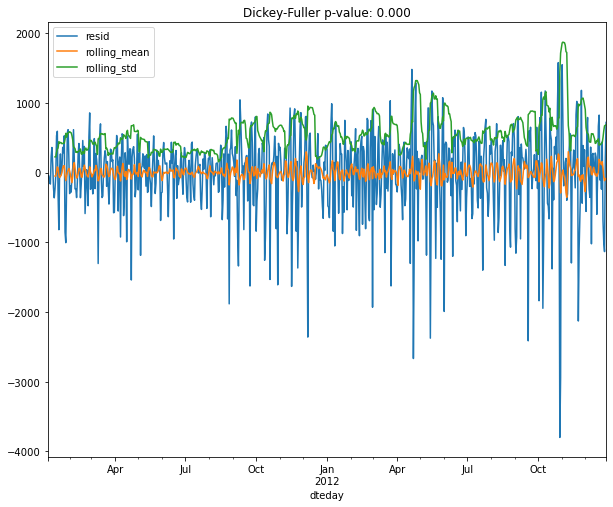

1# Testing residuals for stationarity

2plt.figure()

3test_stationarity(registered_decomposition.resid.dropna(), figsize=(10, 8))

4plt.savefig(f"{assets_path}/registered_resid.png", format="png")

<Figure size 432x288 with 0 Axes>